Hello people…! In this post I will talk about the Binary Heap implemented using structures and not arrays. This is post is the successor to my previous post on Binary Heaps. Using arrays to code Binary Heaps is very comfortable for the programmer. But it is not fit for the day-to-day applications. This is because the Binary Heap is used to implement the Priority Queue and it is meant to grow, not stay of fixed size. But given that it is meant to grow, we cannot use STL Vector for this. This is because the Vector keeps reallocating memory as it grows. So, it is slow. Here we will learn the proper structure based implementation of the Binary Heaps.

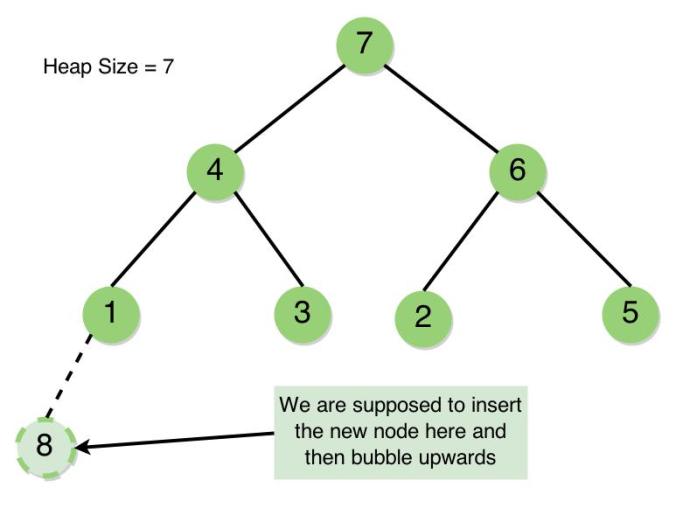

Now, currently we have the knowledge of the Binary Heap, its structure, working and other properties. But we implemented this using arrays. Now if we were to implement using a structure, there is one challenge that we will face. That is to ensure the Structural Property of the heap. Why? For insertion into an array, we created a node at the end which itself ensured the Structural Property is not violated and then we bubbled upwards to ensure that the Heap Property is not violated at any point. In the array, we knew exactly where we are supposed to insert the node. But in a tree coded using structures how will you find where to insert the new element such that the tree remains a complete binary tree..? The same goes when we want to delete the root. How do we know where the last node is. For this, we will use the binary representation of the size of the heap to traverse to the location we want.

Size does matter !

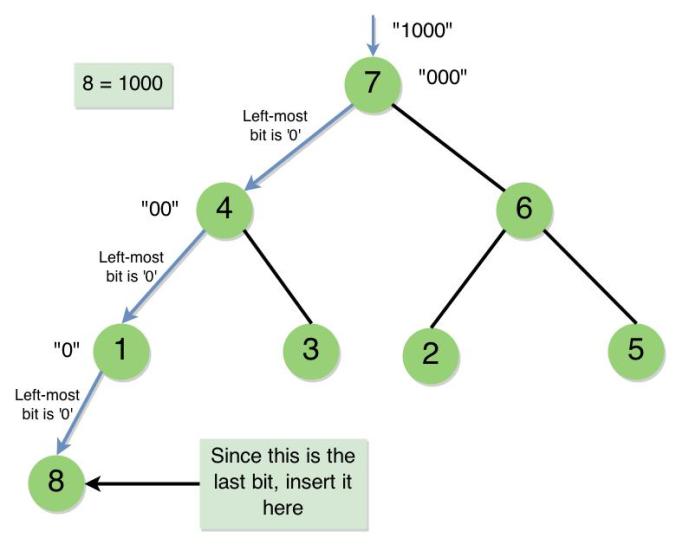

While inserting itself we could keep a track of the heap size. So, this takes constant time. While inserting, we must search for the appropriate location. For this, we will convert the size into its binary representation and begin the traversal from the root. How? Keep reading the bits of the binary representation you just generated, if you encounter ‘0’ go to the left child of the parent, if it is a ‘1’ go to the right child of the parent. I know it sounds really weird, but that’s the best thing we can do. Let’s take up an example to understand this better. Consider the Binary Heap below –

Now consider the binary representation of the number (HeapSize + 1) = 8, which is “1000”. We omit the first bit (left-to-right) because that bit says “come to the root”.

- The Binary String left is “000”. The leftmost bit is ‘0’. So, go to the Left Child. You come to Node 4.

- The Binary String left is “00”. The leftmost bit is ‘0’. So, go to the Left Child. You come to Node 1.

- The Binary String left is “0”. There is only one bit left. This bit doesn’t tell you to “go left” or “go right”, it tells you to “insert it to left” or “insert it to right”. As this bit is “0”, we place the new node as the left child of Node 1.

This is how you must use the binary representation of the (HeapSize + 1) to get to the location you want to insert the node. This is process is depicted in the sketch below –

Every time you insert a node, you will go all the way down the tree, you will not perform any insertions in the middle, so the traversal always takes O(log n), once inserted, you travel up, ensuring that the heap property holds good, so you have to travel all the way up again, so it is another O(log n) operation. So, the over all process takes O(log n), which is same as in the case of an array implementation.

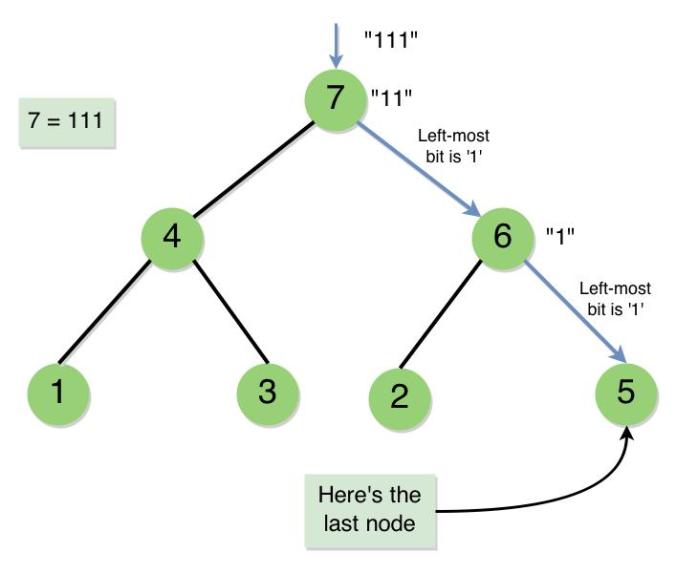

But for deletion, we are supposed to find something else. We are supposed to find out the last node. In the sketch above, before the insertion of 8, we are supposed to somehow find out that the last node is Node 5. There are a couple of methods by which we can accomplish this –

- BFS or DFS – By using the Breadth First Search or the Depth First Search we can find out which is the last node in the Binary Heap by searching the whole tree for a node at the maximum depth which is encountered as last as possible. The time complexity of BFS and DFS is O(|E| + |V|). We would have N vertices and what about the number the edges? We would have N – 1 edges, because every node is pointed by a child pointer, except the root. So, the overall complexity becomes O(N + N), which is O(N).

- Binary Traversal – This is the same traversal as we did in the case of Insertion. I just called it Binary Traversal. But the method which we discussed about gave us the Node to be inserted but not the last node. Can you think of a way of modifying the procedure such that it would give us the last node directly…? Think about this for a couple of minutes and you will figure out the simple logic behind it. For those who can’t, don’t worry I will discuss it, when I do, try to figure how silly a thing you missed…! The logic is simple, you used the binary representation of (HeapSize + 1) to get to the location where you were supposed to insert a node, if you use the binary representation of (HeapSize) itself for traversal, you will end up at the last node…! Try working out this on paper and you will realise the logic behind it. As, we would traverse the whole tree from root to leaf, this takes O(log N), which is way faster than BFS and DFS which was an O(N) operation. This kind of Binary Traversal is depicted in the sketch below –

The next thing that we must know about the structure implementation is that unlike the array implementation, where we could construct the heap using build heap procedure, we cannot do that here. The only way to construct the heap from a given collection of numbers is to keep inserting them one-by-one. As we discussed that in insertion, we would have to go all the way down and come up, inserting a node in the structure implementation will be slower than inserting into a heap implemented using arrays.

So, now let us plan what we would be writing as our code. First things first..! The structure of our nodes in the heap would be –

struct node

{

int value;

struct node * parent;

struct node * leftChild;

struct node * rightChild;

};

Apart from the standard variables that we see in many structure related codes, we would be needing the parent pointer here. This is because, without that, we cannot travel up the tree, which is needed in insertion and deletion.

Heap Sort – If you have understood the concepts of constructing the Binary Heap, finding the Last Node and Extracting maximum element, then Heap Sort becomes obvious. You just have to keep extracting the maximum element and store it in an array or a list. Then, you will have a sorted array. This essentially destroys the entire heap.

Take a break and come back to code the binary heap. If you have already coded the binary search tree or a binary tree before this should be relatively easy for you. If you have not, then this will be challenging, and I’m sure you’ll be proud of your efforts once you have successfully coded this data structure. You will spend 80% of the time debugging your code, and trust me that’s the way programming is done. I can’t discuss the issues that you might encounter while coding but you can always comment them if you need help. Once you are able to code Insertion and Deletion, Heap Sort should be easy. If you get stuck, you can always refer to my code below –

/*

* Binary Heap using Structures

* and Heap Sort Algorithm

*

* Authored by,

* Vamsi Sangam

*/

#include <cstdio>

#include <cstdlib>

#include <list>

using namespace std;

struct node

{

int value;

struct node * parent;

struct node * leftChild;

struct node * rightChild;

};

// Traverses the Heap top-to-bottom once and returns the location

// of the last node. Works on the priciple of traversing a

// Binary Tree by the binary representation of its size.

struct node * findLastNode(struct node * BinaryHeap, int size)

{

list<int> Stack;

// Getting the binary

// representation of the size

while (size != 0) {

if (size & 1) {

Stack.push_front(true);

} else {

Stack.push_front(false);

}

size = size >> 1;

}

// Omit the first bit

Stack.pop_front();

struct node * traverse = BinaryHeap;

bool temp;

while (Stack.size() != 0) {

temp = Stack.front();

Stack.pop_front();

if (temp) {

// encountered 1

// go to rightChild

traverse = traverse->rightChild;

} else {

// encountered 0

// go to leftChild

traverse = traverse->leftChild;

}

}

return traverse;

}

// This makes the neccessary adjustments

// after insertion to ensure the validity

// of Heap Property in the Binary Heap

void bubbleUp(struct node * heap)

{

if (heap->parent == NULL) {

return;

}

if (heap->parent->value < heap->value) {

// Swap child and parent

int temp = heap->value;

heap->value = heap->parent->value;

heap->parent->value = temp;

bubbleUp(heap->parent);

}

}

// The recursive procedure which goes top-to-bottom from the root to the place of

// insertion and inserts the new node with a value 'Value'. Uses binary traversal.

struct node * insertUtil(struct node * BinaryHeap, int value, list<bool> * Stack)

{

bool temp;

if ((*Stack).size() == 1) {

temp = (*Stack).front();

(*Stack).pop_front();

if (temp) {

// create new node as the right child

BinaryHeap->rightChild = (struct node *) calloc(1, sizeof(struct node));

BinaryHeap->rightChild->parent = BinaryHeap;

BinaryHeap = BinaryHeap->rightChild;

BinaryHeap->value = value;

} else {

// create new node as the left child

BinaryHeap->leftChild = (struct node *) calloc(1, sizeof(struct node));

BinaryHeap->leftChild->parent = BinaryHeap;

BinaryHeap = BinaryHeap->leftChild;

BinaryHeap->value = value;

}

} else {

temp = (*Stack).front();

(*Stack).pop_front();

if (temp) {

// encountered 1

// go to rightChild

BinaryHeap = insertUtil(BinaryHeap->rightChild, value, Stack);

} else {

// encountered 0

// go to leftChild

BinaryHeap = insertUtil(BinaryHeap->leftChild, value, Stack);

}

}

return BinaryHeap;

}

// Inserts a new node into the binary heap and also makes

// the neccessary swaps to ensure Heap Property isn't violated

void insertIntoHeap(struct node * BinaryHeap, int value, list<bool> * Stack)

{

(*Stack).pop_front(); // Omit the first bit

// Inserting into Heap and keeping a

// track of the newly inserted node

struct node * newNode = insertUtil(BinaryHeap, value, Stack);

// Bubble the new node up the

// Heap to ensure validity of

// the Heap Property

bubbleUp(newNode);

}

// Allocates memory for the Binary

// Heap thus creates the root node

struct node * getBinaryHeap(int value)

{

struct node * BinaryHeap = (struct node *) calloc(1, sizeof(struct node));

BinaryHeap->value = value;

return BinaryHeap;

}

// A simple utility function

// to aid In-Order Walk

void print(struct node * BinaryHeap)

{

printf("%d ", BinaryHeap->value);

}

// Prints the nodes of the Binary

// Heap in the In-Order fashion

void inOrderTraversal(struct node * BinaryHeap)

{

if (BinaryHeap == NULL) {

return;

}

inOrderTraversal(BinaryHeap->leftChild);

print(BinaryHeap);

inOrderTraversal(BinaryHeap->rightChild);

}

// The standard recursive Heapify procedure to

// convert a partial heap to a complete heap

// Mainly used by Extract-Max procedure

void heapify(struct node * BinaryHeap)

{

if (BinaryHeap->leftChild != NULL && BinaryHeap->rightChild != NULL) {

// both children exist

if (BinaryHeap->value < BinaryHeap->leftChild->value && BinaryHeap->value < BinaryHeap->rightChild->value) {

if (BinaryHeap->leftChild->value > BinaryHeap->rightChild->value) {

// leftChild is greater

int temp = BinaryHeap->value;

BinaryHeap->value = BinaryHeap->leftChild->value;

BinaryHeap->leftChild->value = temp;

heapify(BinaryHeap->leftChild);

} else {

// rightChild is greater

int temp = BinaryHeap->value;

BinaryHeap->value = BinaryHeap->rightChild->value;

BinaryHeap->rightChild->value = temp;

heapify(BinaryHeap->rightChild);

}

} else if (BinaryHeap->value < BinaryHeap->leftChild->value && BinaryHeap->value > BinaryHeap->rightChild->value) {

// leftChild is greater & rightChild is smaller than parent

int temp = BinaryHeap->value;

BinaryHeap->value = BinaryHeap->leftChild->value;

BinaryHeap->leftChild->value = temp;

heapify(BinaryHeap->leftChild);

} else if (BinaryHeap->value > BinaryHeap->leftChild->value && BinaryHeap->value < BinaryHeap->rightChild->value) {

// rightChild is greater & leftChild is smaller than parent

int temp = BinaryHeap->value;

BinaryHeap->value = BinaryHeap->rightChild->value;

BinaryHeap->rightChild->value = temp;

heapify(BinaryHeap->rightChild);

}

} else if (BinaryHeap->rightChild == NULL && BinaryHeap->leftChild != NULL) {

// Only the leftChild exists

if (BinaryHeap->leftChild->value > BinaryHeap->value) {

// The existing leftChild is greater than parent

// So swap

int temp = BinaryHeap->leftChild->value;

BinaryHeap->leftChild->value = BinaryHeap->value;

BinaryHeap->value = temp;

}

// When we reach here, logically speaking, we have come

// to the last node in the Binary Heap, so after this

// we simply return from the function

}

}

// Returns the root value and deletes it by swapping the root

// node and the last node and then calling heapify on root node

int extractMax(struct node * BinaryHeap, struct node * lastNode)

{

if (BinaryHeap == NULL) {

return -1;

}

if (BinaryHeap == lastNode) {

// A corner case where only

// one node exists

int max = BinaryHeap->value;

free(BinaryHeap);

return max;

}

int max = BinaryHeap->value;

// Swap root node and last node

int temp = BinaryHeap->value;

BinaryHeap->value = lastNode->value;

lastNode->value = temp;

// Making its parent point to NULL appropriately

struct node * parent = lastNode->parent;

if (parent->leftChild == lastNode) {

parent->leftChild = NULL;

} else {

parent->rightChild = NULL;

}

// Deleting the lastNode

free(lastNode);

heapify(BinaryHeap); // making adjustments

return max;

}

// Heap Sort procedure which takes and empyt list 'Sorted List'

// and fills it with the elements in sorted order. Keeps extracting

// the maximum element from the Max Heap until it is empty

void heapSort(struct node * BinaryHeap, list<int> * SortedList, int HeapSize)

{

struct node * lastNode;

list<int>::iterator itr;

while (HeapSize != 0) {

//getting the lastNode to assist extractMax procedure

lastNode = findLastNode(BinaryHeap, HeapSize);

(*SortedList).push_front(extractMax(BinaryHeap, lastNode));

--HeapSize;

}

}

int main()

{

int n;

printf("Enter the size of input -\n");

scanf("%d", &n);

int temp, i, j, HeapSize = 0;

struct node * BinaryHeap;

list<bool> Stack;

printf("\nEnter %d integers -\n", n);

for (i = 0; i < n; ++i) {

scanf("%d", &temp);

++HeapSize;

if (i == 0) {

BinaryHeap = getBinaryHeap(temp);

continue;

}

// Clears the Stack before filling it with

// the Binary Representation of HeapSize

Stack.clear();

j = HeapSize;

while (j != 0) {

if (j & 1) {

Stack.push_front(true);

} else {

Stack.push_front(false);

}

j = j >> 1;

}

insertIntoHeap(BinaryHeap, temp, &Stack);

}

printf("\nIn Order Traversal - ");

inOrderTraversal(BinaryHeap);

printf("\n");

list<int> SortedList;

heapSort(BinaryHeap, &SortedList, HeapSize);

BinaryHeap = NULL;

list<int>::iterator itr = SortedList.begin();

printf("\nElements in Sorted order - ");

while (itr != SortedList.end()) {

printf("%d ", *itr);

++itr;

}

printf("\n");

return 0;

}

The code is big and that is how it should be and moreover, it is well commented with information. If you still have doubts regarding the code or any concept, feel free to comment them. I hope my post has helped you to get a complete idea about the Binary Heap. If it did, let me know by commenting…! Keep practising… Happy Coding…! 🙂